Scrapy is one of the most accessible tools you can use to scrape and spider a website.

Today let's see how we can scrape Reddit to get new posts from a subreddit like r/programming.

First, install Scrapy if you haven't already.

pip install scrapy

Once installed, go ahead and create a project by invoking the startproject command.

scrapy startproject scrapingproject

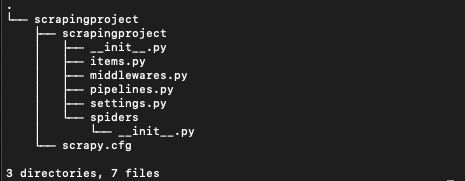

This will output something like this.

New Scrapy project 'scrapingproject', using template directory '/Library/Python/2.7/site-packages/scrapy/templates/project', created in:

/Applications/MAMP/htdocs/scrapy_examples/scrapingproject

You can start your first spider with:

cd scrapingproject

scrapy genspider example example.com

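It also creates a folder structure: typically an outer scrapingproject folder that holds scrapy.cfg, and inside it a Python package of the same name that contains items.py, middlewares.py, pipelines.py, settings.py and the spiders folder.

Now cd into scrapingproject. You will need to do it twice, once for the outer folder and once for the package inside it, like this.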

cd scrapingproject

cd scrapingproject

Now we need a spider to crawl the programming subreddit, so we use the genspider command to have Scrapy create one for us. We call the spider ourfirstbot and pass it the URL of the subreddit.

scrapy genspider ourfirstbot www.reddit.com/r/programming/

This should succeed with a message like this:

Created spider 'ourfirstbot' using template 'basic' in module:

scrapingproject.spiders.ourfirstbot

Great. Now open the file ourfirstbot.py in the spiders folder... it should look like this...

# -*- coding: utf-8 -*-
import scrapy


class OurfirstbotSpider(scrapy.Spider):
    name = 'ourfirstbot'
    allowed_domains = ['www.reddit.com/r/programming/']
    start_urls = ['http://www.reddit.com/r/programming//']

    def parse(self, response):
        pass

Let's examine this code before we proceed...

The allowed_domains list restricts all further crawling to the domains specified here. Scrapy expects bare domain names in this list (for example reddit.com), not URLs with paths, so the generated value is worth trimming.

start_urls is the list of URLs to crawl. In this example, we only need one.

The parse(self, response) method is called by Scrapy with the response of every successfully downloaded URL. This is where we write the code that extracts the data we want.

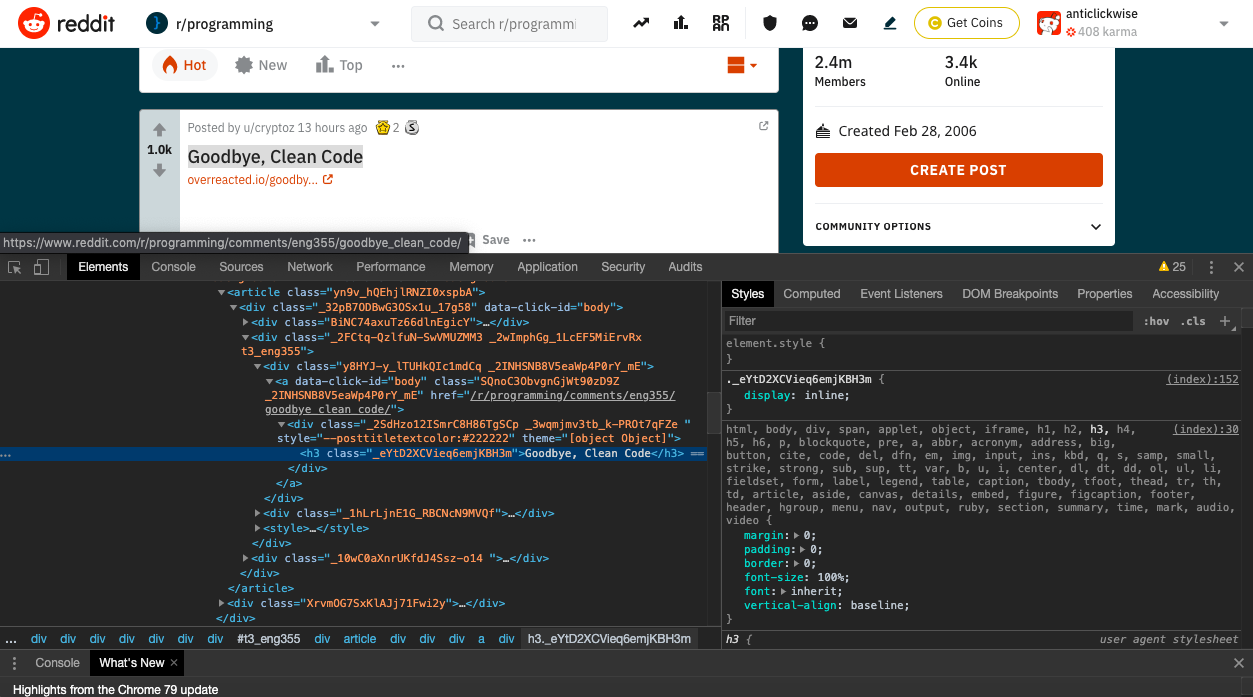

We now need to find the css selector of the elements we need to extract the data. Go to the url https://www.reddit.com/r/programming/ and right click on the Title of one of the posts and click on inspect. This will open thje Google Chrome Inspector like below...

You can see that the css class name of the title is _eYtD2XCVieq6emjKBH3m so we are going to ask to ask scrapy to get us the text property of this class like this.

titles = response.css('._eYtD2XCVieq6emjKBH3m::text').extract()

Similarly, we find the class names of the votes element and the number-of-comments element (note that the class names might change by the time you run this code).

votes = response.css('._1rZYMD_4xY3gRcSS3p8ODO::text').extract()

comments = response.css('.FHCV02u6Cp2zYL0fhQPsO::text').extract()

If you are unfamiliar with CSS selectors, you can refer to this page in the Scrapy documentation: https://docs.scrapy.org/en/latest/topics/selectors.html

We now use the zip function to pair up the items at the same index in the three lists, so that each post's title, votes and comments can be handled as a single entity. Here is how the spider looks.

# -*- coding: utf-8 -*-
import scrapy


class OurfirstbotSpider(scrapy.Spider):
    name = 'ourfirstbot'
    start_urls = [
        'https://www.reddit.com/r/programming/',
    ]

    def parse(self, response):
        # yield response
        titles = response.css('._eYtD2XCVieq6emjKBH3m::text').extract()
        votes = response.css('._1rZYMD_4xY3gRcSS3p8ODO::text').extract()
        comments = response.css('.FHCV02u6Cp2zYL0fhQPsO::text').extract()

        # Give the extracted content row-wise
        for item in zip(titles, votes, comments):
            # Create a dictionary to store the scraped info
            all_items = {
                'title': item[0],
                'vote': item[1],
                'comments': item[2],
            }

            # Yield (give) the scraped info to Scrapy
            yield all_items
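One thing to keep in mind about the zip step above: zip pairs values purely by position and stops at the shortest list, so if one post has no vote count, rows can shift or get dropped silently. A small illustration with made-up values (not real Reddit data):

```python
# Made-up sample values standing in for the three extracted lists.
titles = ["Post A", "Post B", "Post C"]
votes = ["120", "45"]  # one vote count is missing
comments = ["10 comments", "3 comments", "7 comments"]

# zip pairs items by index and stops at the shortest input.
rows = [
    {"title": title, "vote": vote, "comments": comment}
    for title, vote, comment in zip(titles, votes, comments)
]

for row in rows:
    print(row)

# Comparing lengths first is a cheap way to spot misaligned lists.
lengths_match = len(titles) == len(votes) == len(comments)
print("lists aligned:", lengths_match)
```

If the lists can differ in length, selecting each post's container element first and extracting the fields relative to it is usually more robust.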

And now lets run this with the command .

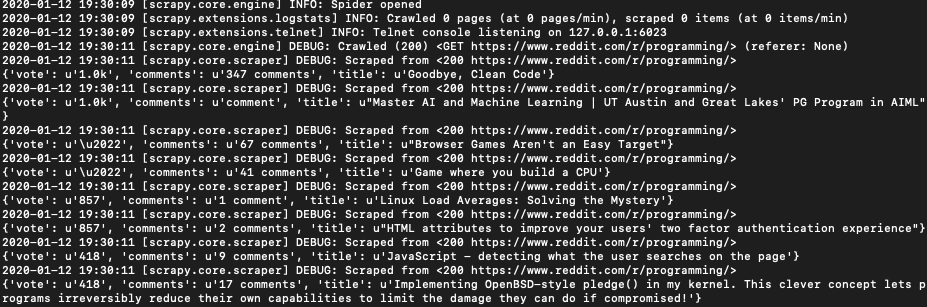

scrapy crawl ourfirstbotAnd Bingo... you get the results as below.

Now let's export the extracted data to a CSV file. All you have to do is provide the export file name, like this:

scrapy crawl ourfirstbot -o data.csv

or if you want the data in the JSON format...

scrapy crawl ourfirstbot -o data.json
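Once data.csv exists, it can be read back with Python's csv module. The sketch below uses an in-memory string with made-up rows in place of the real file, and assumes the header row matches the keys yielded by the spider (title, vote, comments).

```python
import csv
import io

# Made-up content in the shape the export is assumed to have.
sample_csv = io.StringIO(
    "title,vote,comments\n"
    "Post A,120,10 comments\n"
    "Post B,45,3 comments\n"
)

# For a real export, replace sample_csv with
# open("data.csv", newline="", encoding="utf-8").
reader = csv.DictReader(sample_csv)
posts = list(reader)

for post in posts:
    print(post["title"], "-", post["vote"], "votes")
```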

Scaling Scrapy

The example above is OK for small-scale web crawling projects. But if you try to scrape large quantities of data at high speed from websites like Reddit, you will find that sooner or later your access is restricted. Reddit can tell you are a bot, so one of the things you can do is run the crawler impersonating a web browser. This is done by passing a browser's user agent string to the Reddit web server so that it doesn't block you.

Like this...

scrapy crawl ourfirstbot -s USER_AGENT="Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/34.0.1847.131 Safari/537.36" -s ROBOTSTXT_OBEY=False
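If you would rather not pass these on every run, the same two settings can go in the project's settings.py, which startproject creates. USER_AGENT and ROBOTSTXT_OBEY are standard Scrapy settings; DOWNLOAD_DELAY is an extra one added here as an assumption, and its value is only an example.

```python
# settings.py (fragment): values are examples, adjust them to your project.

# Browser-like user agent string, the same one used on the command line.
USER_AGENT = (
    "Mozilla/5.0 (Windows NT 6.1; WOW64) "
    "AppleWebKit/537.36 (KHTML, like Gecko) "
    "Chrome/34.0.1847.131 Safari/537.36"
)

# Equivalent to -s ROBOTSTXT_OBEY=False on the command line.
ROBOTSTXT_OBEY = False

# Assumption: pause about 2 seconds between requests to the same site.
DOWNLOAD_DELAY = 2
```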

In more advanced implementations you will even need to rotate this string so Reddit can't tell it's the same browser! Welcome to web scraping.
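One way to rotate the string inside Scrapy is a small downloader middleware that sets a random User-Agent header on each request. This is a sketch rather than the only approach: the class name RotateUserAgentMiddleware and the list of strings are made up for illustration, while process_request(request, spider) is the hook Scrapy calls on downloader middlewares.

```python
import random

# Illustrative list; in practice you would maintain a larger, current set.
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/34.0.1847.131 Safari/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_9_3) AppleWebKit/537.75.14 "
    "(KHTML, like Gecko) Version/7.0.3 Safari/7046A194A",
    "Mozilla/5.0 (Windows NT 6.1; WOW64; rv:40.0) Gecko/20100101 Firefox/40.1",
]


class RotateUserAgentMiddleware:
    """Hypothetical downloader middleware that picks a user agent per request."""

    def process_request(self, request, spider):
        # Overwrite the header before the request is downloaded.
        request.headers["User-Agent"] = random.choice(USER_AGENTS)
        # Returning None tells Scrapy to continue processing the request.
        return None
```

To enable it, you would add the class to DOWNLOADER_MIDDLEWARES in settings.py, for example under the assumed path scrapingproject.middlewares.RotateUserAgentMiddleware with a priority such as 400.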

If we get a little more advanced, you will realise that Reddit can simply block your IP, ignoring all your other tricks. This is a bummer, and this is where most web crawling projects fail.

Investing in a private rotating proxy service like Proxies API can, most of the time, make the difference between a successful, headache-free web scraping project that gets the job done consistently and one that never really works.

Plus, with the running offer of 1000 free API calls, you have almost nothing to lose by using our rotating proxy and comparing notes. It only takes one line of integration, so it's hardly disruptive.

Our rotating proxy server Proxies API provides a simple API that can solve all IP blocking problems instantly.

With millions of high-speed rotating proxies located all over the world, automatic IP rotation, automatic User-Agent string rotation (which simulates requests from different, valid web browsers and web browser versions) and automatic CAPTCHA solving technology, hundreds of our customers have successfully solved the headache of IP blocks with a simple API.

The whole thing can be accessed through a simple API call like the one below, from any programming language.

curl "http://api.proxiesapi.com/?key=API_KEY&url=https://example.com"

We have a running offer of 1000 API calls completely free. Register and get your free API Key here.

Once you have an API_KEY from Proxies API, you just have to change your code to this...

# -*- coding: utf-8 -*-
import scrapy


class OurfirstbotSpider(scrapy.Spider):
    name = 'ourfirstbot'
    start_urls = [
        'http://api.proxiesapi.com/?key=API_KEY&url=https://www.reddit.com/r/programming/',
    ]

    def parse(self, response):
        # yield response
        titles = response.css('._eYtD2XCVieq6emjKBH3m::text').extract()
        votes = response.css('._1rZYMD_4xY3gRcSS3p8ODO::text').extract()
        comments = response.css('.FHCV02u6Cp2zYL0fhQPsO::text').extract()

        # Give the extracted content row-wise
        for item in zip(titles, votes, comments):
            # Create a dictionary to store the scraped info
            all_items = {
                'title': item[0],
                'vote': item[1],
                'comments': item[2],
            }

            # Yield (give) the scraped info to Scrapy
            yield all_items

We have only changed one line, in the start_urls list, and that hands IP rotation, user agent string rotation and even rate limits over to the proxy service.
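Since the target address travels as a query parameter, it can be safer to build the start URL programmatically so that any special characters in it are percent-encoded. A minimal sketch using the standard library, assuming the endpoint and the key and url parameters shown in the curl example, and assuming the service decodes the url parameter in the usual way; build_proxy_url is a made-up helper name and API_KEY is a placeholder.

```python
from urllib.parse import urlencode

# Endpoint taken from the curl example; API_KEY is a placeholder.
PROXY_ENDPOINT = "http://api.proxiesapi.com/"
API_KEY = "API_KEY"


def build_proxy_url(target_url, api_key=API_KEY):
    """Return the proxy URL that wraps target_url (hypothetical helper)."""
    # urlencode percent-encodes the target so its own ':' and '/' characters
    # cannot be confused with the outer URL's structure.
    query = urlencode({"key": api_key, "url": target_url})
    return f"{PROXY_ENDPOINT}?{query}"


start_urls = [build_proxy_url("https://www.reddit.com/r/programming/")]
print(start_urls[0])
```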