Today we are going to see how we can scrape New York Times articles using Python and BeautifulSoup in a simple and elegant manner.

This article aims to get you started on a real-world problem while keeping things super simple, so you get familiar with the tools and see practical results as fast as possible.

So the first thing we need is to make sure we have Python 3 installed. If not, you can just get Python 3 and get it installed before you proceed.

Then you can install Beautiful Soup.

pip3 install beautifulsoup4

We will also need the libraries requests, lxml, and soupsieve to fetch data, parse the markup, and use CSS selectors. Install them using:

pip3 install requests soupsieve lxml

Once installed, open an editor and type in:

# -*- coding: utf-8 -*-
from bs4 import BeautifulSoup
import requests

Now let's go to the NYT home page and inspect the data we can get.

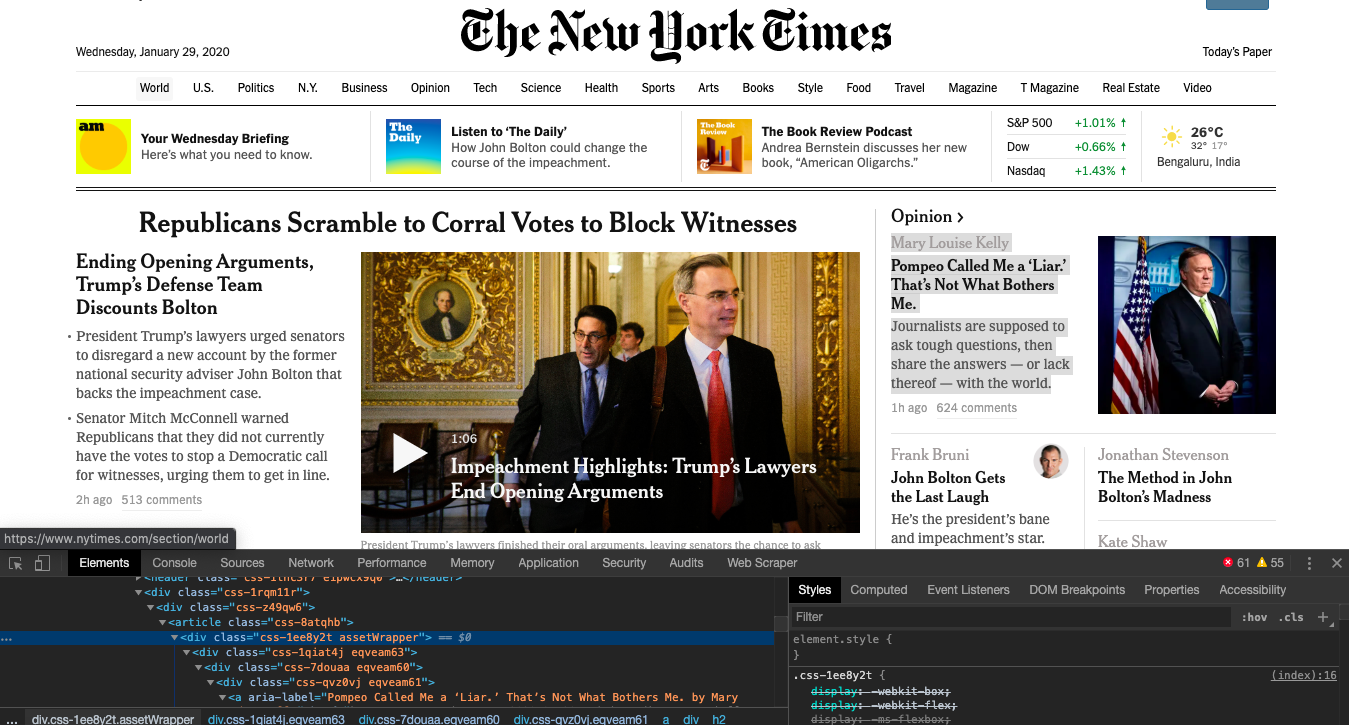

This is how it looks.

Back to our code now. Let's try and get this data by pretending we are a browser like this.

# -*- coding: utf-8 -*-
from bs4 import BeautifulSoup
import requests

# Send a browser-like User-Agent so the request looks like it comes from a regular browser
headers = {'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_11_2) AppleWebKit/601.3.9 (KHTML, like Gecko) Version/9.0.2 Safari/601.3.9'}
url = 'https://www.nytimes.com/'
response = requests.get(url, headers=headers)

# response.text holds the page's HTML; printing response alone only shows the status
print(response.text)

Save this as nyt_bs.py.

If you run it

python3 nyt_bs.py

you will see the whole HTML page.
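Before moving on, it can help to make the fetch step a little more defensive. The sketch below is one possible way to do it, not the only one: `fetch_html` is a hypothetical helper name, and the 10-second timeout is an arbitrary assumption. It relies on `raise_for_status()` from requests, which raises an error on 4xx/5xx responses instead of letting us parse an error page by mistake.

```python
import requests

# Same browser-like User-Agent used in the article's script
HEADERS = {
    'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_11_2) '
                  'AppleWebKit/601.3.9 (KHTML, like Gecko) '
                  'Version/9.0.2 Safari/601.3.9'
}


def fetch_html(url, session=None, timeout=10):
    """Return the page body as text, raising on HTTP errors.

    `fetch_html` and the default timeout are illustrative assumptions.
    """
    session = session or requests.Session()
    response = session.get(url, headers=HEADERS, timeout=timeout)
    response.raise_for_status()  # raises requests.HTTPError on 4xx/5xx
    return response.text
```

You would call it as `html = fetch_html('https://www.nytimes.com/')` and pass the result to BeautifulSoup. Accepting an optional session also makes the function easy to exercise without touching the network.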

Now let's use CSS selectors to get to the data we want. To do that, let's go back to Chrome and open the inspect tool. We now need to get to all the articles.

Notice that each article title is contained in an h2 element inside a block with the assetWrapper class, so we can get to it like this.

# -*- coding: utf-8 -*-
from bs4 import BeautifulSoup
import requests

headers = {'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_11_2) AppleWebKit/601.3.9 (KHTML, like Gecko) Version/9.0.2 Safari/601.3.9'}
url = 'https://www.nytimes.com/'
response = requests.get(url, headers=headers)
soup = BeautifulSoup(response.content, 'lxml')

# Each article block on the page carries the assetWrapper class
for item in soup.select('.assetWrapper'):
    try:
        print('----------------------------------------')
        headline = item.find('h2').get_text()
        print(headline)
    except AttributeError:
        # find() returned None: this block has no h2, so skip it
        print('')

This selects all the assetWrapper article blocks and runs through them, looking for the h2 element in each and printing its text.

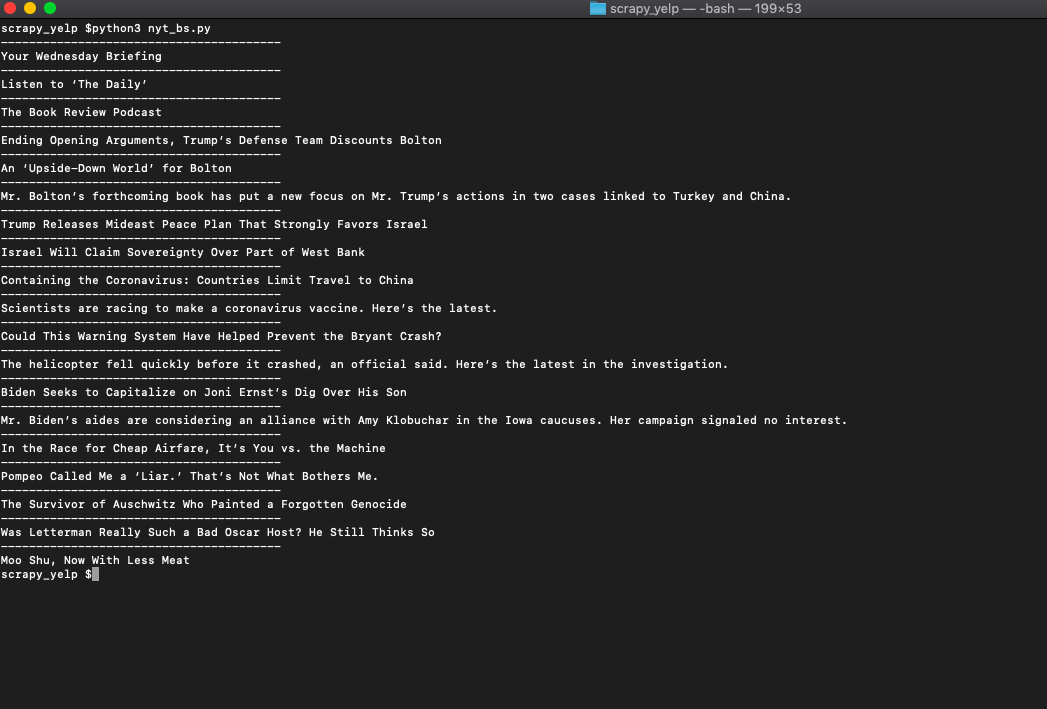

So when you run it, it prints each headline under a dashed separator line.

Bingo!! We got the article titles.
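To see the selection logic in isolation, here is a small sketch that runs on a hand-written HTML snippet instead of the live page. The markup in `SAMPLE_HTML` is made up for illustration and only mimics the assetWrapper structure described above; the real NYT markup may differ and changes over time. It also uses Python's built-in `html.parser` so it does not depend on lxml.

```python
from bs4 import BeautifulSoup

# Hypothetical markup that imitates the structure described in the article
SAMPLE_HTML = """
<section class="assetWrapper">
  <a href="/2020/01/01/world/example-one.html"><h2>First headline</h2></a>
  <p>First summary.</p>
</section>
<section class="assetWrapper">
  <p>A block without a headline.</p>
</section>
<section class="assetWrapper">
  <a href="/2020/01/01/us/example-two.html"><h2>Second headline</h2></a>
</section>
"""


def extract_headlines(html):
    """Return the h2 text of every .assetWrapper block that has one."""
    soup = BeautifulSoup(html, "html.parser")
    headlines = []
    for item in soup.select(".assetWrapper"):
        h2 = item.find("h2")
        if h2 is None:
            # No headline in this block, so skip it rather than fail
            continue
        headlines.append(h2.get_text(strip=True))
    return headlines


print(extract_headlines(SAMPLE_HTML))
```

Checking for `None` explicitly is an alternative to the try/except in the script: it skips blocks without a headline and lets any other, unexpected error surface.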

Now, with the same process, we find the elements that hold the other data, like the article link and the article summary.

# -*- coding: utf-8 -*-
from bs4 import BeautifulSoup
import requests

headers = {'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_11_2) AppleWebKit/601.3.9 (KHTML, like Gecko) Version/9.0.2 Safari/601.3.9'}
url = 'https://www.nytimes.com/'
response = requests.get(url, headers=headers)
soup = BeautifulSoup(response.content, 'lxml')

# Each article block on the page carries the assetWrapper class
for item in soup.select('.assetWrapper'):
    try:
        print('----------------------------------------')
        headline = item.find('h2').get_text()
        link = item.find('a')['href']
        summary = item.find('p').get_text()
        print(headline)
        print(link)
        print(summary)
    except (AttributeError, TypeError, KeyError):
        # A missing h2, a, p, or href means this block is not a full article; skip it
        print('')

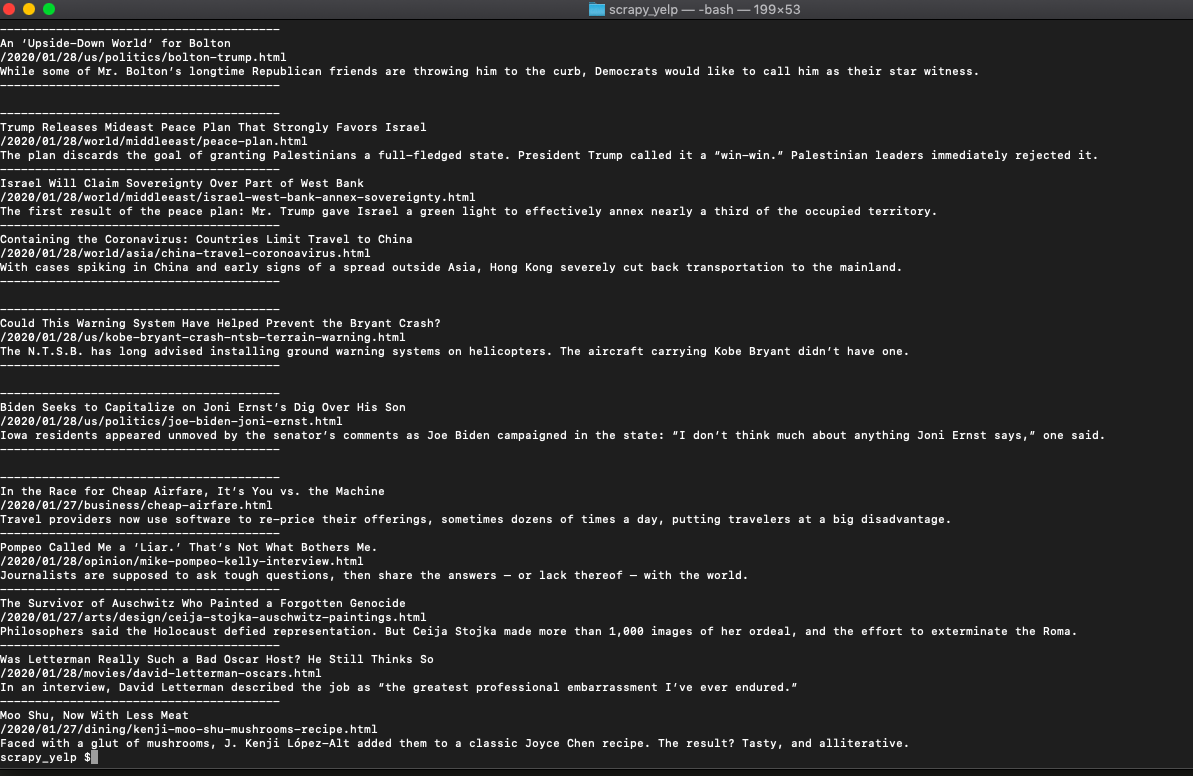

That, when run, should print the headline, link, and summary for each article.
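One practical wrinkle: the href values you get back may be relative paths rather than full URLs. That is an assumption about the markup, not something verified here. If it holds, a sketch like the following could normalize them with the standard library's `urljoin` and bundle each article into a dictionary. The names `absolute_link` and `to_record` are hypothetical helpers.

```python
from urllib.parse import urljoin

BASE_URL = "https://www.nytimes.com/"


def absolute_link(href, base=BASE_URL):
    """Resolve a possibly relative href against the site's base URL."""
    return urljoin(base, href)


def to_record(headline, href, summary):
    """Bundle one article's fields into a dictionary with a full link."""
    return {
        "headline": headline.strip(),
        "link": absolute_link(href),
        "summary": summary.strip(),
    }


record = to_record(" Example headline ", "/section/world", "Example summary. ")
print(record["link"])
```

Links that are already absolute pass through `urljoin` unchanged, so the helper is safe to apply to every href.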

If you want to use this in production and scale to thousands of links, you will find that the New York Times blocks your IP quickly. In this scenario, using a rotating proxy service to rotate IPs is almost a must.

Otherwise, you tend to get IP blocked a lot by automatic location, usage, and bot detection algorithms.
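To make the idea of IP rotation concrete, here is a minimal sketch of cycling through a list of proxies by hand. The proxy addresses are placeholders invented for illustration, and `rotating_proxies` is a hypothetical helper. The dictionaries it yields have the shape that the `proxies` argument of `requests.get` expects.

```python
from itertools import cycle

# Hypothetical proxy addresses, for illustration only
PROXY_URLS = [
    "http://proxy-a.example.com:8080",
    "http://proxy-b.example.com:8080",
]


def rotating_proxies(proxy_urls):
    """Yield requests-style proxy mappings, cycling through proxy_urls forever."""
    for proxy in cycle(proxy_urls):
        yield {"http": proxy, "https": proxy}


pool = rotating_proxies(PROXY_URLS)

# Each request would then take the next proxy from the pool, for example:
# requests.get(url, headers=headers, proxies=next(pool), timeout=10)
```

A hand-rolled pool like this only spreads requests over the addresses you supply; it does nothing about dead proxies or CAPTCHAs, which is where a managed service comes in.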

Our rotating proxy server Proxies API provides a simple API that can solve all IP Blocking problems instantly.

- With millions of high speed rotating proxies located all over the world

- With our automatic IP rotation

- With our automatic User-Agent-String rotation (which simulates requests from different, valid web browsers and web browser versions)

- With our automatic CAPTCHA solving technology

Hundreds of our customers have successfully solved the headache of IP blocks with a simple API.

The whole thing can be accessed through a simple API call like the one below, from any programming language.

curl "http://api.proxiesapi.com/?key=API_KEY&url=https://example.com"

We have a running offer of 1000 API calls completely free. Register and get your free API Key here.
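For reference, the curl call above could be built from Python roughly as follows. This is a sketch under assumptions: `build_api_url` is a hypothetical helper, `API_KEY` is a placeholder, and it assumes the service accepts a percent-encoded `url` parameter (the curl example passes it unencoded).

```python
from urllib.parse import urlencode

# Endpoint taken from the curl example above
API_ENDPOINT = "http://api.proxiesapi.com/"


def build_api_url(api_key, target_url):
    """Build the request URL, percent-encoding the key and target URL."""
    query = urlencode({"key": api_key, "url": target_url})
    return f"{API_ENDPOINT}?{query}"


print(build_api_url("API_KEY", "https://example.com"))
```

The resulting string can be passed to `requests.get` in place of the original page URL, and the rest of the scraping script stays the same.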