Web scraping can automate data collection from websites. In this comprehensive tutorial, we'll scrape a Wikipedia page to extract dog breed information and images using Node.js.

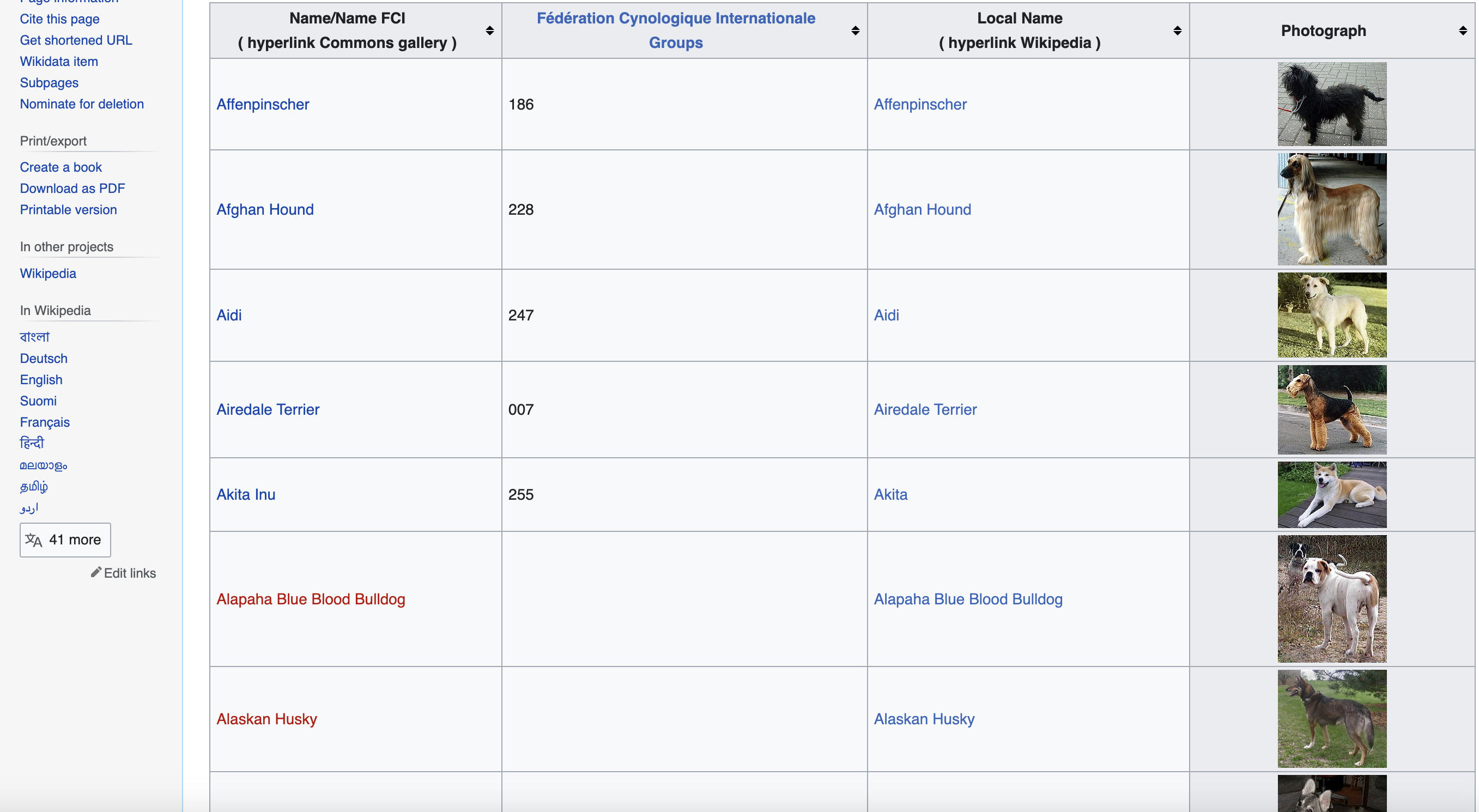

This is the page we are talking about.

Getting Set Up

We'll use these Node modules:

npm install axios cheerio

const fs = require('fs');

const axios = require('axios');

const cheerio = require('cheerio');

axios makes HTTP requests

cheerio parses HTML and helps query/manipulate DOM

fs provides file system methods (it ships with Node.js, so it needs no install)

Defining the Target URL

We'll scrape the List of dog breeds page hosted on Wikimedia Commons, Wikipedia's sister site:

const url = 'https://commons.wikimedia.org/wiki/List_of_dog_breeds';

We'll also define a User-Agent header to mimic a real browser request:

const headers = {

"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.36"

};

Making the HTTP Request

Let's use axios to fetch the page content:

axios.get(url, {headers})

.then(response => {

// Request succeeded, let's scrape!

})

.catch(error => {

// Handle errors

});

On success, the full HTML is in response.data.
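For the failure branch, a small helper can make the .catch handler more informative. The sketch below relies on the error shape axios documents (error.response when the server answered with a non-2xx status, error.request when no answer arrived). The function name describeRequestError is illustrative and not part of axios.

```javascript
// Summarise a failed axios request in one line.
// describeRequestError is a hypothetical helper name, not an axios API.
function describeRequestError(error) {
  if (error.response) {
    // The server replied, but with a status outside the 2xx range.
    return `Server responded with status ${error.response.status}`;
  }
  if (error.request) {
    // The request went out but no response came back.
    return 'No response received (network problem or timeout)';
  }
  // Something failed before the request could be sent.
  return `Request could not be sent: ${error.message}`;
}

// Plain objects stand in for real axios errors in this demo.
console.log(describeRequestError({ response: { status: 404 } }));
console.log(describeRequestError({ request: {} }));
```

In the tutorial's code it could be used as .catch(error => console.error(describeRequestError(error))).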

Loading and Parsing with Cheerio

To query/manipulate DOM elements, we need to load raw HTML into cheerio:

const $ = cheerio.load(response.data);

The $ function now works like jQuery: pass it a CSS selector to find elements in the parsed page.

Extracting the Main Data Table

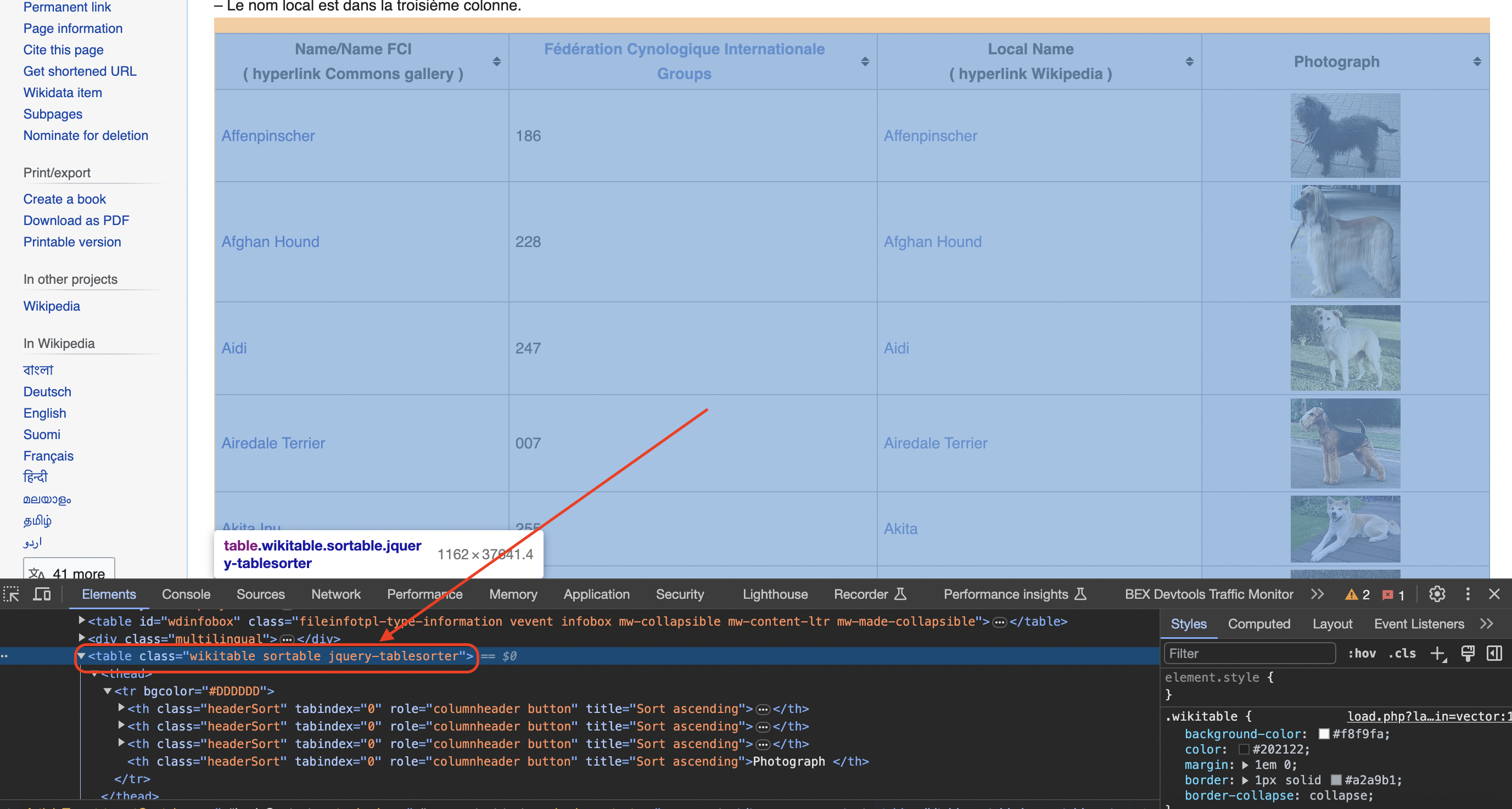

Inspecting the page

Using Chrome's Inspect tool, you can see that the data sits in a table element with the classes wikitable and sortable.

We can reference it like this:

const table = $('table.wikitable.sortable');

Initializing Data Arrays

Let's initialize arrays to store extracted data:

const names = [];

const groups = [];

const localNames = [];

const photographs = [];

We'll also create a folder to save images:

if (!fs.existsSync('dog_images')) {

fs.mkdirSync('dog_images');

}
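As an alternative to the existsSync check, fs.mkdirSync accepts a recursive option that makes the call safe to repeat. The sketch below works in a temporary directory (an assumption made for the demo) instead of the tutorial's dog_images folder in the working directory.

```javascript
const fs = require('fs');
const os = require('os');
const path = require('path');

// Demo location only; the tutorial itself writes to ./dog_images.
const baseDir = fs.mkdtempSync(path.join(os.tmpdir(), 'scrape-demo-'));
const imageDir = path.join(baseDir, 'dog_images');

// With { recursive: true } the call creates missing parent folders too.
fs.mkdirSync(imageDir, { recursive: true });

// Calling it again does not throw, so no existsSync() guard is needed.
fs.mkdirSync(imageDir, { recursive: true });

console.log('Image folder ready:', imageDir);
```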

Scraping Row Data

We can loop through the rows and use selectors to extract cell data:

$('tr', table).each((index, row) => {

const columns = $('td, th', row);

// Skip header

if (columns.length === 4) {

const name = $('a', columns.eq(0)).text();

const group = columns.eq(1).text();

const localName = $('span', columns.eq(2)).text() || '';

const img = columns.eq(3).find('img');

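// Resolve protocol-relative or relative src values against the page URL

const src = img.attr('src');

const photograph = src ? new URL(src, url).href : '';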

// Extract and store data

}

});

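Key selectors:

$('a', columns.eq(0)) finds the link in the first cell, whose text is the breed name

columns.eq(1).text() reads the group from the second cell

$('span', columns.eq(2)) finds the span in the third cell that holds the local name

columns.eq(3).find('img') finds the image in the fourth cell, whose src attribute is the photo URL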

Downloading and Saving Images

For each image link we find, we can download the image file:

if (photograph) {

axios.get(photograph, {responseType: 'arraybuffer'})

.then(response => {

fs.writeFileSync(`dog_images/${name}.jpg`, response.data);

});

}
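Two details can trip up the download step: an img src may be protocol-relative (starting with //) or relative, and a breed name may contain characters that are not valid in a file name. The helpers below are a sketch of one way to handle both. The names toAbsoluteUrl and imageFileName and the example.org address are illustrative assumptions.

```javascript
const path = require('path');

const pageUrl = 'https://commons.wikimedia.org/wiki/List_of_dog_breeds';

// Resolve an <img src> value against the page URL so that protocol-relative
// ("//host/file.jpg") and relative ("/img/file.jpg") values become absolute.
function toAbsoluteUrl(src, base = pageUrl) {
  return new URL(src, base).href;
}

// Build a file name from the breed name: every run of characters other than
// ASCII letters, digits, underscore and hyphen becomes a single underscore.
// The extension is taken from the URL path, falling back to .jpg.
function imageFileName(breedName, imageUrl) {
  const safeName = breedName.trim().replace(/[^a-zA-Z0-9_-]+/g, '_');
  const ext = path.extname(new URL(imageUrl).pathname) || '.jpg';
  return `${safeName}${ext.toLowerCase()}`;
}

const absolute = toAbsoluteUrl('//upload.example.org/thumb/Akita_inu.PNG');
console.log(absolute);
console.log(imageFileName('Akita / Akita Inu', absolute));
```

Note that this replacement rule also turns accented or non-Latin letters into underscores. A looser pattern may be preferable if breed names should stay readable.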

So for each row, we've now extracted the key data and images into organized arrays and files!

From here you might:
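One possible next step is to combine the four parallel arrays into one record per breed and save the result as JSON. The sketch below uses sample values in place of scraped data and writes to the system temp directory. Both are assumptions made to keep the demo self-contained.

```javascript
const fs = require('fs');
const os = require('os');
const path = require('path');

// Sample values standing in for the arrays filled by the scraper.
const names = ['Affenpinscher', 'Akita'];
const groups = ['2', '5'];
const localNames = ['Affenpinscher', ''];
const photographs = ['https://upload.example.org/a.jpg', ''];

// Zip the parallel arrays into one object per breed.
const breeds = names.map((name, i) => ({
  name,
  group: groups[i],
  localName: localNames[i],
  photograph: photographs[i],
}));

// Written to the temp directory for this demo; any writable path works.
const outFile = path.join(os.tmpdir(), 'dog_breeds.json');
fs.writeFileSync(outFile, JSON.stringify(breeds, null, 2));

console.log(`Wrote ${breeds.length} breeds to ${outFile}`);
```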

Here is the full code:

const fs = require('fs');

const axios = require('axios');

const cheerio = require('cheerio');

// URL of the Wikipedia page

const url = 'https://commons.wikimedia.org/wiki/List_of_dog_breeds';

// Define a user-agent header to simulate a browser request

const headers = {

"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.36"

};

// Send an HTTP GET request to the URL with the headers

axios.get(url, { headers })

.then(response => {

if (response.status === 200) {

// Load the HTML content of the page using cheerio

const $ = cheerio.load(response.data);

// Find the table with class 'wikitable sortable'

const table = $('table.wikitable.sortable');

// Initialize arrays to store the data

const names = [];

const groups = [];

const localNames = [];

const photographs = [];

// Create a folder to save the images

if (!fs.existsSync('dog_images')) {

fs.mkdirSync('dog_images');

}

// Iterate through rows in the table (skip the header row)

$('tr', table).each((index, row) => {

const columns = $('td, th', row);

if (columns.length === 4) {

// Extract data from each column

const name = $('a', columns.eq(0)).text().trim();

const group = columns.eq(1).text().trim();

// Check if the third column contains a span element

const spanTag = columns.eq(2).find('span');

const localName = spanTag.text().trim() || '';

// Check for the existence of an image tag within the fourth column

const imgTag = columns.eq(3).find('img');

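// Resolve protocol-relative or relative src values against the page URL

const imgSrc = imgTag.attr('src');

const photograph = imgSrc ? new URL(imgSrc, url).href : '';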

// Download the image and save it to the folder

if (photograph) {

axios.get(photograph, { responseType: 'arraybuffer' })

.then(imageResponse => {

if (imageResponse.status === 200) {

const imageFilename = `dog_images/${name}.jpg`;

fs.writeFileSync(imageFilename, imageResponse.data);

}

})

.catch(error => {

console.error('Failed to download image:', error);

});

}

// Append data to respective arrays

names.push(name);

groups.push(group);

localNames.push(localName);

photographs.push(photograph);

}

});

// Print or process the extracted data as needed

for (let i = 0; i < names.length; i++) {

console.log("Name:", names[i]);

console.log("FCI Group:", groups[i]);

console.log("Local Name:", localNames[i]);

console.log("Photograph:", photographs[i]);

console.log();

}

} else {

console.log("Failed to retrieve the web page. Status code:", response.status);

}

})

.catch(error => {

console.error("Error:", error);

});

In more advanced implementations, you will even need to rotate the User-Agent string so the website can't tell that the requests come from the same browser.
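A minimal sketch of User-Agent rotation, assuming a small hand-maintained pool of strings. The list contents and the randomHeaders name are illustrative and do not come from any library.

```javascript
// Hypothetical pool of User-Agent strings; maintain your own list in practice.
const userAgents = [
  'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.36',
  'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15 (KHTML, like Gecko) Version/14.0 Safari/605.1.15',
  'Mozilla/5.0 (X11; Linux x86_64; rv:109.0) Gecko/20100101 Firefox/115.0',
];

// Return a headers object with a randomly chosen User-Agent.
function randomHeaders() {
  const index = Math.floor(Math.random() * userAgents.length);
  return { 'User-Agent': userAgents[index] };
}

console.log(randomHeaders());
```

Each request could then be sent as axios.get(url, { headers: randomHeaders() }) in place of the fixed headers object.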

Going a step further, you will find that the server can simply block your IP address, ignoring all your other tricks. This is a bummer, and it is where most web crawling projects fail.

Overcoming IP Blocks

Investing in a private rotating proxy service like Proxies API can often make the difference between a headache-free web scraping project that gets the job done consistently and one that never really works.

Plus, with our running offer of 1000 free API calls, you have almost nothing to lose by trying our rotating proxy and comparing notes. It only takes one line of integration, so it's hardly disruptive.

Our rotating proxy server, Proxies API, provides a simple API that can solve all IP blocking problems instantly.

Hundreds of our customers have successfully solved the headache of IP blocks with a simple API.

The whole thing can be accessed through a simple API call like the one below, from any programming language.

curl "http://api.proxiesapi.com/?key=API_KEY&url=https://example.com"

We have a running offer of 1000 API calls completely free. Register and get your free API key here.
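The curl call above can be mirrored in Node.js by building the same request URL. The endpoint and the key and url parameter names are taken from that example. API_KEY is a placeholder, buildProxyUrl is an illustrative name, and percent-encoding the target URL assumes the service decodes standard query strings.

```javascript
// Build the proxy request URL from the curl example.
// buildProxyUrl is a hypothetical helper; API_KEY is a placeholder value.
function buildProxyUrl(targetUrl, apiKey = 'API_KEY') {
  const proxyUrl = new URL('http://api.proxiesapi.com/');
  proxyUrl.searchParams.set('key', apiKey);
  // searchParams percent-encodes the target, so its own "?" and "&"
  // cannot be confused with the proxy's query parameters.
  proxyUrl.searchParams.set('url', targetUrl);
  return proxyUrl.href;
}

console.log(buildProxyUrl('https://example.com'));
```

The scraper would then call axios.get(buildProxyUrl(url)) where it previously requested the page directly.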